Differences Between Sanity And Smoke Testing

In the software industry, smoke testing and sanity testing are distinct but equally important methodologies, each used at a different stage of the software development lifecycle.

Smoke testing, also known as build verification testing, is performed to verify the basic functionality of a software application or system after a build or release. It’s a broad and shallow check that ensures the build is stable enough for further testing.

Sanity testing, on the other hand, is performed after receiving a software build with minor changes to code or functionality. It’s a narrow and deep check that verifies the changes have not introduced new defects in the affected functionality or components.

In this article, we will examine sanity and smoke testing, when to use each, how they differ, and best practices for sanity testing.

What is Sanity Testing?

Sanity testing is a type of software testing typically conducted after a build has passed smoke testing. Its purpose is to ensure that minor changes to software functionality or features do not introduce new defects.

Sanity testing is essential for several reasons, including:

- Risk Mitigation: A sanity test can quickly identify critical issues or regressions introduced by minor changes. As a result, software updates and modifications are less likely to cause problems.

- Time Efficiency: A sanity test is a lightweight process that provides rapid feedback. It ensures that the application behaves as expected without investing a lot of time in exhaustive testing.

- Progress Tracking: Sanity tests offer a snapshot of the application’s health after specific changes. As a result, teams can monitor progress during development cycles and make informed decisions.

- Quality Assurance: Sanity tests verify that small changes do not break existing functionality, acting as a safety net that catches unexpected issues.

Sanity Testing Example

Imagine you’re working on a simple e-commerce web application. The application has several modules, including a login page, home page, user profile page, and user registration. During development, the team identified a defect on the login page: the password field accepts passwords of fewer than five alphanumeric characters, even though the requirement sets a minimum length of eight characters.

We want to ensure that the recent fix for the password field doesn’t introduce any new issues. We’ll specifically check the login page functionality. Now, let’s apply sanity testing to this scenario:

Test Steps:

- Enter valid credentials (username and password).

- Verify that the password field now correctly enforces the minimum length requirement (for example, that a seven-character password is rejected).

- Confirm that the login process works as expected.

Expected Outcome: The login page should function correctly without any issues related to the password field.

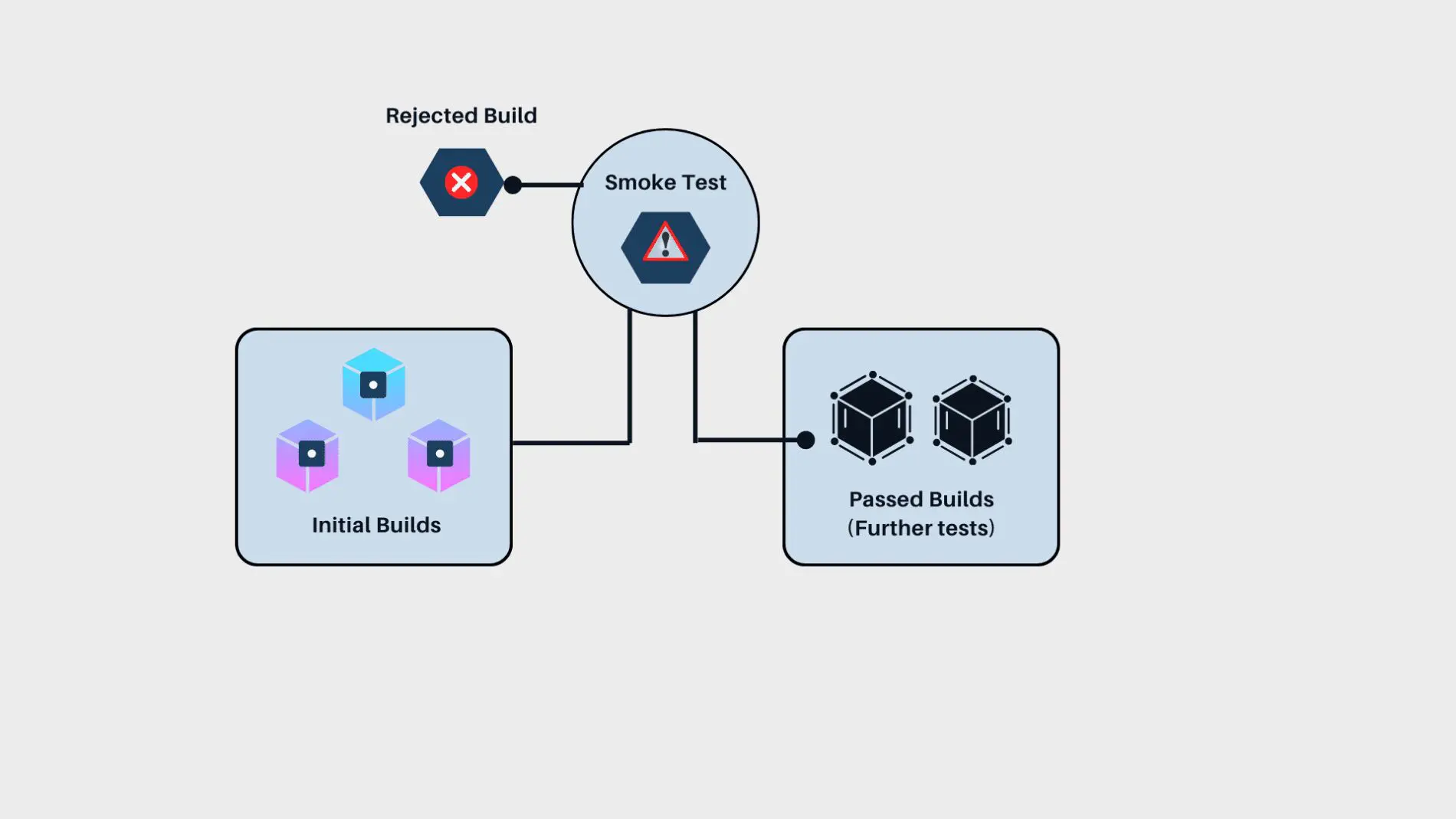
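The test steps above lend themselves to a small automated check. Below is a minimal sketch using Python's standard `unittest` module. It assumes hypothetical `validate_password` and `login` functions and an in-memory `USERS` store standing in for the real application; only the eight-character minimum comes from the example.

```python
import unittest

# Requirement from the example: passwords must be at least eight characters.
MIN_PASSWORD_LENGTH = 8

# Hypothetical stand-in for the application's user store.
USERS = {"alice": "s3cretPass"}


def validate_password(password: str) -> bool:
    """Return True if the password meets the minimum length requirement."""
    return len(password) >= MIN_PASSWORD_LENGTH


def login(username: str, password: str) -> bool:
    """Hypothetical login: reject too-short passwords, then compare with the store."""
    if not validate_password(password):
        return False
    return USERS.get(username) == password


class LoginSanityTests(unittest.TestCase):
    """Narrow, deep checks on the login area touched by the password fix."""

    def test_valid_credentials_still_log_in(self):
        self.assertTrue(login("alice", "s3cretPass"))

    def test_short_passwords_are_rejected(self):
        # The original defect: passwords shorter than five characters were accepted.
        self.assertFalse(validate_password("abcd"))
        # Boundary values around the eight-character minimum.
        self.assertFalse(validate_password("abcdefg"))
        self.assertTrue(validate_password("abcdefgh"))

    def test_wrong_password_is_rejected(self):
        self.assertFalse(login("alice", "wrongPass1"))


def run_sanity_suite() -> bool:
    """Run only the login sanity tests and report whether they all passed."""
    suite = unittest.TestLoader().loadTestsFromTestCase(LoginSanityTests)
    result = unittest.TextTestRunner(verbosity=2).run(suite)
    return result.wasSuccessful()


if __name__ == "__main__":
    run_sanity_suite()
```

Calling `run_sanity_suite()` executes only the login-related tests, which keeps the check narrow and fast, as a sanity test should be.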

What is Smoke Testing?

Smoke testing, also called build verification testing or build acceptance testing, is performed on each new build at the start of the testing process. It checks that a software application's most critical functions perform as intended. Smoke tests aim to catch major issues early so they can be fixed before more detailed testing begins.

Smoke Testing Example

Imagine you’re working on an online shopping platform. A new build has been deployed with updates to the product catalog, search functionality, and checkout process. Before extensive testing begins, a smoke test is run to ensure the critical features work as expected. Test cases for this scenario are as follows:

- User Registration and Login:

  - Verify that users can successfully register and log in.

  - Check if login credentials are validated correctly.

- Product Catalog and Search:

  - Confirm that the product catalog displays items accurately.

  - Test the search functionality by looking up specific products.

- Cart and Checkout:

  - Add items to the cart and proceed to checkout.

  - Ensure the checkout process works smoothly, including payment methods and order confirmation.

If these core functions pass the smoke test, the software is considered stable enough for further testing. In this way, smoke testing prevents builds with critical problems from progressing further.
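As a rough illustration, the three smoke test areas above could be scripted as one shallow check each. This Python sketch assumes a hypothetical in-memory `Shop` class in place of the real platform; in practice each check would drive the deployed build, for example through its UI or API.

```python
from typing import Callable, Dict, List


class Shop:
    """Hypothetical in-memory stand-in for the shopping platform's core features."""

    def __init__(self) -> None:
        self.users: Dict[str, str] = {}
        self.catalog: Dict[str, str] = {"sku-1": "Running shoes", "sku-2": "Water bottle"}
        self.cart: List[str] = []

    def register(self, user: str, password: str) -> None:
        self.users[user] = password

    def login(self, user: str, password: str) -> bool:
        return self.users.get(user) == password

    def search(self, term: str) -> List[str]:
        return [sku for sku, name in self.catalog.items() if term.lower() in name.lower()]

    def add_to_cart(self, sku: str) -> None:
        if sku not in self.catalog:
            raise KeyError(sku)
        self.cart.append(sku)

    def checkout(self) -> str:
        if not self.cart:
            raise ValueError("cart is empty")
        self.cart.clear()
        return "order-confirmed"


# One shallow check per critical area: broad coverage, little depth.
def check_registration_and_login(shop: Shop) -> bool:
    shop.register("smoke-user", "password123")
    return shop.login("smoke-user", "password123") and not shop.login("smoke-user", "wrong")


def check_catalog_and_search(shop: Shop) -> bool:
    return len(shop.catalog) > 0 and shop.search("shoes") == ["sku-1"]


def check_cart_and_checkout(shop: Shop) -> bool:
    shop.add_to_cart("sku-1")
    return shop.checkout() == "order-confirmed"


SMOKE_CHECKS: Dict[str, Callable[[Shop], bool]] = {
    "registration and login": check_registration_and_login,
    "catalog and search": check_catalog_and_search,
    "cart and checkout": check_cart_and_checkout,
}


def run_smoke_test(shop: Shop) -> bool:
    """Run every check; the build counts as stable only if all of them pass."""
    all_passed = True
    for name, check in SMOKE_CHECKS.items():
        try:
            passed = check(shop)
        except Exception:  # a crash in a core feature is a smoke-test failure
            passed = False
        print(f"{'PASS' if passed else 'FAIL'}: {name}")
        all_passed = all_passed and passed
    return all_passed


if __name__ == "__main__":
    stable = run_smoke_test(Shop())
    print("Build stable for further testing" if stable else "Build rejected")
```

The runner deliberately continues after a failing check, so a single run reports every broken core area, and it treats an exception as a failure rather than letting it abort the whole run.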

Smoke Vs. Sanity Testing

When to Use Sanity Vs. Smoke Testing

The choice between sanity testing and smoke testing depends on the project's specific needs and context. This section explains the key differences between the two methodologies, their roles in the software development lifecycle, and the conditions under which each is most effective.

Breadth of Testing Scope vs. Depth of Testing

In terms of breadth and depth, sanity testing has a narrow scope and a small coverage area. It examines specific areas in depth after changes such as bug fixes, ensuring that new or updated features work smoothly with existing functionality.

Smoke testing, on the other hand, covers a wider range of functionality and usually runs right after a new build is delivered. It is often used to check an application’s basic functionality across all relevant platforms.

This initial testing ensures that the primary functions operate correctly before further detailed tests are undertaken, making it crucial for the early detection of significant issues.
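One way to picture the difference in scope is as two selections from the same test catalog: smoke testing picks the shallow checks, which span every module, while sanity testing picks every check, shallow or deep, from the modules that changed. The catalog entries below are hypothetical and reuse the modules from the e-commerce sanity example.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class CatalogEntry:
    name: str
    module: str
    shallow: bool  # True for a quick "does it basically work?" check


# Hypothetical test catalog for the e-commerce example; names are illustrative.
CATALOG: List[CatalogEntry] = [
    CatalogEntry("login_page_loads", "login", shallow=True),
    CatalogEntry("password_min_length_enforced", "login", shallow=False),
    CatalogEntry("password_boundary_values", "login", shallow=False),
    CatalogEntry("home_page_loads", "home", shallow=True),
    CatalogEntry("profile_page_loads", "profile", shallow=True),
    CatalogEntry("profile_update_persists", "profile", shallow=False),
    CatalogEntry("registration_form_submits", "registration", shallow=True),
]


def select_smoke(catalog: Iterable[CatalogEntry]) -> List[str]:
    """Breadth: the shallow checks, which together touch every module."""
    return [t.name for t in catalog if t.shallow]


def select_sanity(catalog: Iterable[CatalogEntry], changed: Set[str]) -> List[str]:
    """Depth: every check, shallow or deep, for the modules that changed."""
    return [t.name for t in catalog if t.module in changed]


if __name__ == "__main__":
    print("smoke: ", select_smoke(CATALOG))
    print("sanity:", select_sanity(CATALOG, {"login"}))
```

For a fix to the login module, `select_sanity(CATALOG, {"login"})` returns all three login tests, while `select_smoke(CATALOG)` returns one shallow check from each of the four modules.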

Regression Testing Vs. Acceptance Testing

Sanity testing acts as a form of selective regression testing. After minor updates or fixes, it checks only the critical functionality they affect, focusing on specific components rather than the entire system. This approach efficiently confirms that recent changes do not adversely affect existing functionality.

Conversely, smoke testing is a preliminary acceptance test to verify that a software build meets the essential criteria for further comprehensive testing. It ensures that the fundamental operations needed for deeper feature testing are functional, thus setting the stage for full-scale acceptance tests.

Release Decision Vs. Test Decision

Sanity testing is crucial for making release decisions. It determines whether a build is stable enough for production after minor fixes or updates. A failed sanity test indicates critical issues, suggesting that the build should be withheld from release and returned for further development to avoid potential operational disruptions.

Smoke testing, on the other hand, guides the test decision. It checks basic functionality to determine whether a build is ready for detailed testing. A failed smoke test indicates underlying problems, so further testing is inadvisable until they are resolved; this keeps testing resources from being spent on a broken build.
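The two decisions can be summarized in a small decision function. This is a simplified sketch, assuming a pipeline in which every build is smoke tested first and sanity testing follows a minor fix; the function name and messages are hypothetical, and real pipelines usually weigh more signals than two pass/fail flags.

```python
from typing import Optional


def next_step(smoke_passed: bool, sanity_passed: Optional[bool] = None) -> str:
    """Map test outcomes to the decision each type of test informs."""
    # Smoke testing drives the *test* decision: is this build worth testing further?
    if not smoke_passed:
        return "reject build: fix basic functionality before further testing"
    # No sanity run yet (e.g. a fresh build): hand it over for detailed testing.
    if sanity_passed is None:
        return "proceed to detailed testing"
    # Sanity testing drives the *release* decision after a minor fix or update.
    if sanity_passed:
        return "release candidate"
    return "withhold release: return build for further development"


if __name__ == "__main__":
    for outcome in [(False, None), (True, None), (True, True), (True, False)]:
        print(outcome, "->", next_step(*outcome))
```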

Stable Build Vs. Unstable Build

Sanity testing is typically applied to stable builds where specific, often minor, changes have been made. It’s used when the application's overall stability isn’t in question, but the team needs confirmation that recent adjustments haven’t introduced new issues.

Smoke testing, however, is useful for evaluating the stability of all builds, regardless of the extent of changes. It is an essential first step in the testing cycle for every new build, ensuring the software is fundamentally functional before further tests are done.

Scripted vs. Unscripted

Both sanity and smoke tests are often scripted to provide consistency and efficiency. Sanity tests are derived from more comprehensive regression tests and are usually automated to verify fixed functionalities swiftly.

Smoke tests, which cover broader aspects of the system, also benefit from automation, allowing for quick assessment of the build's readiness for subsequent testing phases.
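To run smoke and sanity subsets from the same automated suite, tests are often labeled and then selected by label. The sketch below does this with Python's standard `unittest` module; the `tag` decorator, the `build_suite` helper, and the `cart_total` function under test are hypothetical, written only for this illustration.

```python
import unittest
from typing import Callable, List


def tag(*names: str) -> Callable:
    """Hypothetical helper that attaches labels such as 'smoke' or 'sanity' to a test."""
    def decorator(func: Callable) -> Callable:
        func.tags = set(names)
        return func
    return decorator


def cart_total(prices: List[float], discount: float = 0.0) -> float:
    """Hypothetical function under test: sum the prices and apply a fractional discount."""
    return round(sum(prices) * (1 - discount), 2)


class CartTests(unittest.TestCase):
    @tag("smoke", "sanity")
    def test_single_item_total(self):
        self.assertEqual(cart_total([10.0]), 10.0)

    @tag("sanity")
    def test_discount_is_applied(self):
        # Suppose a recent fix touched discount handling.
        self.assertEqual(cart_total([10.0, 30.0], discount=0.25), 30.0)

    @tag("sanity")
    def test_empty_cart_totals_zero(self):
        self.assertEqual(cart_total([]), 0.0)


def build_suite(case: type, wanted: str) -> unittest.TestSuite:
    """Collect only the test methods of `case` that carry the wanted tag."""
    suite = unittest.TestSuite()
    for name in unittest.TestLoader().getTestCaseNames(case):
        if wanted in getattr(getattr(case, name), "tags", set()):
            suite.addTest(case(name))
    return suite


if __name__ == "__main__":
    unittest.TextTestRunner(verbosity=2).run(build_suite(CartTests, "smoke"))
```

In pytest, the same idea is built in as custom markers: decorate tests with `@pytest.mark.smoke` or `@pytest.mark.sanity`, register the markers in the pytest configuration, and select a subset with `pytest -m smoke`.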

Sanity Testing Best Practices

The following best practices explain how to conduct sanity testing effectively and confirm that specific features or components of a software application work as intended.

Understanding the Scope

Sanity testing begins with a clear understanding of the scope. This involves identifying the critical functionalities of the application that need testing. For instance, in an e-commerce website, the checkout process could be a key functionality.

When deciding which critical areas to focus on, consider factors such as business impact, user experience, and regulatory compliance. At the same time, avoid scope creep and stick to the essential features to keep the test efficient.

Planning

Once the scope is defined, a clear sanity test plan should be crafted. This plan outlines the testing objectives, the functionalities to be tested, and the testing approach. It should be concise, focused on the main functionalities, and flexible enough to accommodate any changes in the application.

The planning stage also involves prioritizing test cases by risk, so that high-risk areas such as payment processing or security features are tested first. In addition, focus on regression testing of critical user flows, which cover the most common interactions and are crucial for overall system stability.
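Risk-based prioritization can be as simple as scoring each planned test and sorting by score. This sketch uses the common "likelihood times impact" heuristic on hypothetical 1 to 5 scales; the test names and scores are illustrative, not drawn from a real project.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PlannedTest:
    name: str
    likelihood: int  # 1 (unlikely to break) to 5 (very likely); hypothetical scale
    impact: int      # 1 (cosmetic) to 5 (payments or security); hypothetical scale

    @property
    def risk(self) -> int:
        # Common heuristic: risk score = likelihood x impact.
        return self.likelihood * self.impact


def prioritize(tests: List[PlannedTest]) -> List[PlannedTest]:
    """Order tests so the highest-risk areas run first."""
    return sorted(tests, key=lambda t: t.risk, reverse=True)


PLAN = [
    PlannedTest("profile avatar upload", likelihood=2, impact=1),
    PlannedTest("card payment authorization", likelihood=3, impact=5),
    PlannedTest("login with valid credentials", likelihood=4, impact=4),
    PlannedTest("password reset email", likelihood=2, impact=3),
]

if __name__ == "__main__":
    for t in prioritize(PLAN):
        print(f"{t.risk:>2}  {t.name}")
```

With these sample scores, login (16) and payment authorization (15) run first, and the low-impact avatar upload (2) runs last.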

Using Appropriate Tools

Appropriate tools can improve the efficiency of sanity testing. Automated tests can execute repetitive checks, freeing time for more complex testing. Bug tracking tools help manage and track issues found during testing, while test management tools organize and manage the testing process.

It’s important to choose automation tools that align with your application’s technology stack, such as Selenium or Cypress for web applications, JUnit for Java code, and Appium for mobile apps.

Documentation

Documentation plays a crucial role in sanity testing. This means maintaining a test log or using a test management tool to record test results, and documenting any issues found during the test, however minor.

This helps track progress, ensure transparency, and provide a record of the testing for future reference or to understand the project's history.
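A test log need not be elaborate. As one possible approach, the sketch below appends each result as a line of JSON (the JSON Lines convention) so the file can be searched or reloaded later; the field names and the build identifier format are assumptions, and a test management tool would typically take over this job on larger projects.

```python
import json
from datetime import datetime, timezone
from typing import Dict, List


def log_result(path: str, build_id: str, test_name: str, passed: bool, notes: str = "") -> Dict[str, str]:
    """Append one test result to a JSON Lines log file and return the entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "build": build_id,
        "test": test_name,
        "result": "pass" if passed else "fail",
        "notes": notes,
    }
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")
    return entry


def read_log(path: str) -> List[Dict[str, str]]:
    """Load all logged entries, e.g. to review a build's testing history."""
    with open(path, encoding="utf-8") as log:
        return [json.loads(line) for line in log if line.strip()]


def failures(entries: List[Dict[str, str]], build_id: str) -> List[str]:
    """Names of the tests that failed for one build."""
    return [e["test"] for e in entries if e["build"] == build_id and e["result"] == "fail"]
```

For example, `log_result("sanity-log.jsonl", "build-42", "login_page_loads", passed=False, notes="spinner never stops")` appends one line for a hypothetical build, and `failures(read_log("sanity-log.jsonl"), "build-42")` lists what still needs attention.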

Final Thoughts

Both sanity and smoke testing serve unique purposes and are used at different stages of the process to ensure the quality and functionality of the software. While sanity testing is used to check specific functionalities after minor changes, smoke testing is performed to verify the basic functionality of the entire system after a new build or release.

When it comes to ensuring the quality of your software, having a reliable partner can make all the difference. Testlio is a leading QA software testing company that can help you navigate the complexities of sanity and smoke testing. With our industry-leading expertise, you can ensure that your software meets the highest quality and functionality standards.